Re-watching ProperBird's presentation from Property Portal Watch Bangkok got me thinking... What are the rules around real estate listings data?

Real estate portals no doubt have some complaints about services like ProperBird scraping their content, but surely agents aren't always thrilled about how portals use their listings data to make money, and vendors can be shocked to see pictures of their living room on websites they've never heard of.

After looking through the small print and speaking to stakeholders, it looks like the use of the data contained in real estate listings is essentially something of a grey area.

In early 2021 the U.S. agent community's Zillow hate flared up as it often does. On this particular occasion, the agent opprobrium was centred around the portal's updated terms and conditions and an article that claimed that the portal not only had the right to distribute agents' listings data but actually owned it.

Having been published by an agent-facing publication with the help of an agent-facing consultancy firm, the article may have had an ulterior motive, but it did raise an interesting point. What is the standard content relationship between listing agents and real estate marketplaces?

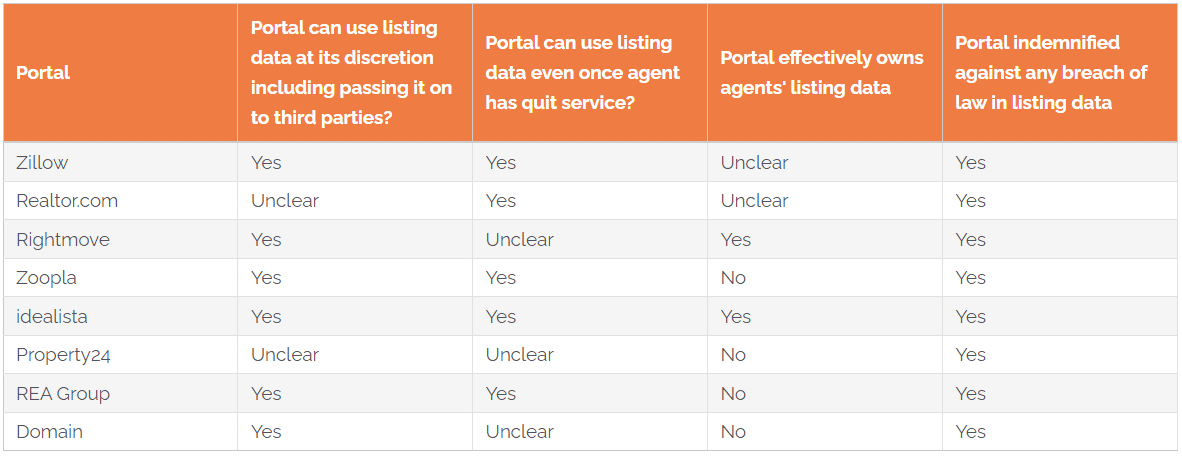

Having read through the Ts & Cs of the portals in the table above, it seems that...

Now I'm obviously not a legal expert, so if any of the portals mentioned want to set me straight, please do reach out. The point is that real estate agents aren't legal experts either, and if I'm left unsure after reading the fine print, they probably will be too.

Another poorly defined relationship is the one between real estate portals and third parties scraping their listings...

Portals have valuable, public-facing information and there are plenty of businesses out there that can turn that information to their advantage or even offer to scrape it for others.

While some portals, such as Zillow, explicitly prohibit the use of "any automated processes" to obtain information from their sites, scraping publicly available information is legal.

For as long as the internet has existed there have been programs that extract, or "scrape" information from web pages. Scraping, or "crawling" as it is also known, is how Google obtains all the information in its index and for that reason, the practice is never likely to be truly illegal.
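For readers who have never seen one, a scraper can be very small. The sketch below is only an illustration using Python's standard library: the HTML snippet, the `listing` class name and the `data-price` attribute are all made up for the example, and a real portal's markup will look different.

```python
from html.parser import HTMLParser

# Hypothetical markup for illustration only; real portals structure pages differently.
SAMPLE_PAGE = """
<ul>
  <li class="listing" data-price="450000">2-bed flat, Riverside</li>
  <li class="listing" data-price="725000">4-bed house, Hilltop</li>
  <li class="advert">Sponsored: removals service</li>
</ul>
"""


class ListingParser(HTMLParser):
    """Collects the title and price of every <li class="listing"> element."""

    def __init__(self):
        super().__init__()
        self.listings = []
        self._current = None  # the listing being read, if any

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "li" and attrs.get("class") == "listing":
            self._current = {"price": int(attrs["data-price"]), "title": ""}

    def handle_data(self, data):
        # Only keep text that sits inside a listing element.
        if self._current is not None:
            self._current["title"] += data

    def handle_endtag(self, tag):
        if tag == "li" and self._current is not None:
            self._current["title"] = self._current["title"].strip()
            self.listings.append(self._current)
            self._current = None


def scrape_listings(html):
    parser = ListingParser()
    parser.feed(html)
    return parser.listings


listings = scrape_listings(SAMPLE_PAGE)
for item in listings:
    print(item["title"], item["price"])
```

The same parsing step works whether the page was fetched by a search engine crawler or by a competitor, which is part of why the line between the two is hard to draw.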

When discussing the legality of web scraping, many experts point to a landmark ruling made by a Washington D.C. district court which concluded that using automated tools to access publicly available information, such as portal listings, is not unlawful.

According to David Reischer, CEO of LegalAdvice.com, scraping is just a technological advance that makes collecting information easier:

"It is not meaningfully different from using a tape recorder instead of taking written notes. Typically, the information a person scrapes is located in a public forum. Hence, when a scraper attempts to record the contents of public websites for research purposes, they are arguably affected with a 'First Amendment' interest."

Broadly speaking the types of companies that might want to scrape the listings information from a real estate portal fall into two categories: those that visually reproduce the same listings data on an individual basis and those that use the listings data on an aggregated basis without showing the listings themselves.

According to ProperBird CEO Lukas Rose, no portal has so far complained because his company only aggregates the listings data it scrapes for analysis purposes. He adds that portals actually scrape each other all the time to assess their competitive position. Rose says that what ProperBird does is perfectly legal and that scrapers are not breaking any rules "as long as they are not simply backfilling another platform by reproducing the scraped information".
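To make the distinction concrete, aggregated use might look something like the sketch below, where scraped records are boiled down to per-portal summary figures and no individual listing is shown. This is not ProperBird's actual method: the portal names, field names and prices are all invented for the example.

```python
from statistics import median

# Hypothetical scraped records; portal names and prices are made up.
scraped = [
    {"portal": "portal_a", "price": 4_200_000},
    {"portal": "portal_a", "price": 6_500_000},
    {"portal": "portal_a", "price": 3_900_000},
    {"portal": "portal_b", "price": 5_000_000},
    {"portal": "portal_b", "price": 7_000_000},
]


def summarise_by_portal(records):
    """Reduce individual listings to a count and median price per portal."""
    prices_by_portal = {}
    for record in records:
        prices_by_portal.setdefault(record["portal"], []).append(record["price"])
    return {
        portal: {"listings": len(prices), "median_price": median(prices)}
        for portal, prices in prices_by_portal.items()
    }


summary = summarise_by_portal(scraped)
for portal, stats in summary.items():
    print(portal, stats["listings"], stats["median_price"])
```

Only the summary leaves the function, so nothing in the output could be used to backfill another platform with the original listings.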

Companies that do reproduce scraped property listings data include aggregators such as Mitula and Trovit (both owned by the Japanese portal operator Lifull) which have internal teams dedicated to scraping listings from as many sources as possible. Historically they have also contracted specialist third-party companies to scrape listings from sensitive sources.

According to Mitula's former Chairman, Simon Baker, any portal's argument against aggregators is made null and void by Google.

“We often had interesting discussions with portals around scraping their content. The main concern of portals against scraping was that new sites can quickly aggregate content to create a competitive site. However, in essence, this is a false argument.”

“Firstly, portals willingly want Google et al to scrape their content. They go out of their way to provide a myriad of landing pages in the hope of capturing free traffic. For many portals, they have actually built large businesses off the back of high quality SEO traffic.”

“Secondly, aggregators such as Mitula and Trovit have built a business model, similar to Google, around aggregating content and traffic and driving leads to the portals so the portals can then monetise them.”

Baker claims that ultimately both the portal and its agent customers will benefit from aggregators scraping their listings in the form of increased traffic.

“Finally, whether a portal likes it or not, all content is available from many different sources. Stopping an aggregator from scraping a portal's site means a competitor or a customer will benefit. This has two potential impacts: it increases the cost of marketing to generate traffic and it reduces the importance of the portal to its customers as they generate traffic from alternate sources.”

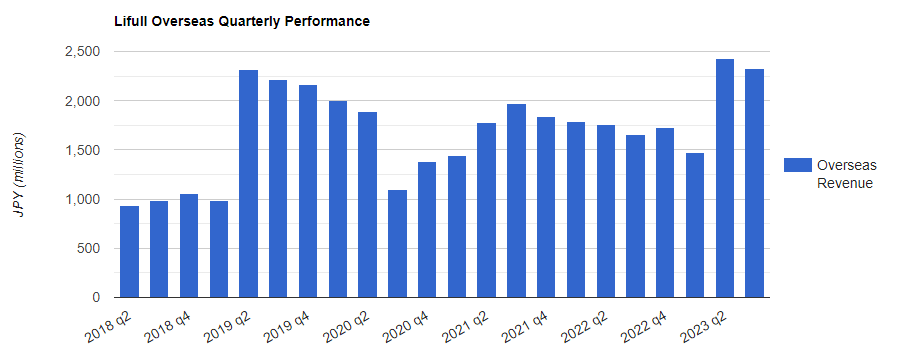

Traffic is a dying metric these days though. Portals are increasingly looking to lead quality rather than volume, and Lifull admitted in its latest quarterly filings that its 'Overseas' business segment, which houses its aggregation business, has been impacted over recent years by portals decreasing the money they spend on traffic.

The struggle for aggregators now isn't getting the content; it's making sure that they can enhance the listings data they get via scraping or a direct feed so that they continue to outrank portals and generate good quality leads.

There are some real estate portals around the world that give third parties their listings data for free via an API that they've built themselves.

If data scientists out there are going to build models around real estate or if some bright entrepreneur wants to build the next great commute-time property search, these portals would rather they do so using their data and not someone else's.

The age of portals open-sourcing their listings data might be waning though. We reached out to no fewer than five different real estate portals about their use of listings APIs and none of them would talk about the use cases on record.

Ultimately, the question of listings data ownership and use is one of those grey areas with more questions than answers. In an age of heightened sensitivity about personal data, it does seem curious that the legal data relationship between a property's owner, their agent, the portal and any aggregators that take feeds from the portal seems to be so loosely defined.